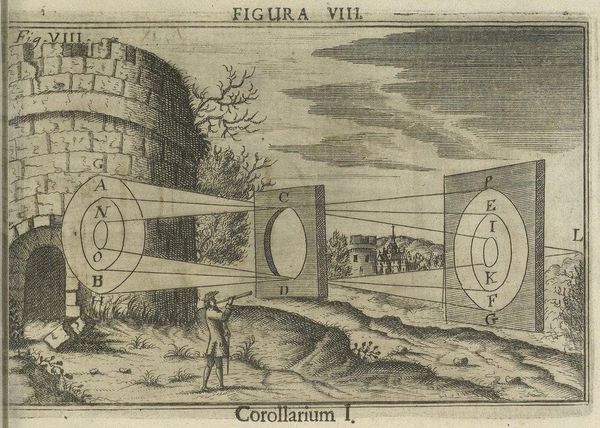

Use the Otari Gateway with OpenCode

AI coding sessions can feel like a black box. Route OpenCode through the Otari Gateway to track costs, token usage, and model activity in real time. Get budget controls and visibility across every session without changing a single line of application code.